Benchmarking of Table Structure Recognition Algorithms

Created: 2010-04-30

Revision as of 16:31, 6 July 2010


Description

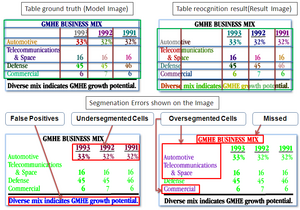

The aim of this task is to benchmark different table spotting and table structure recognition algorithms. Several algorithms have been developed for table recognition tasks and evaluated on different datasets [1-4]. In addition, researchers have used different benchmarking measures to evaluate their algorithms, which makes the reported results difficult to compare.

This task comprises 427 images from the publicly available UNLV dataset. We provide the table structure ground truth and word bounding box results for these document images. The UNLV images, together with the ground truth XML files and the word bounding box OCR files, can be used to generate pixel-accurate ground truth image files as described in [5]. Tools to generate these ground truth image files are provided below.

Competing algorithms need to produce their output in an XML format similar to the sample ground truth. Five example outputs from our T-RECS algorithm are included in the set of samples provided for reference. The output of each algorithm is then used to produce result image files, which the evaluation framework compares against the ground truth images to produce the following benchmarking measures:

1. Correct Detections
2. Partial Detections
3. Over Segmentation
4. Under Segmentation
5. Missed
6. False Positives

at the following levels of abstraction:

1. Table
2. Rows
3. Columns
4. Cells
5. Row spanning cells
6. Column spanning cells
7. Row/column spanning cells
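To make the six measures listed above more concrete, the following Python sketch counts them for a single level of abstraction from pixel overlaps between ground truth regions and result regions. It is only an illustration: the authoritative definitions are those in [5], and the function names (`rect`, `covered`, `count_measures`), the representation of regions as sets of pixel coordinates, and the two thresholds (`significant=0.1`, `correct=0.9`) are assumptions made for this example, not values taken from the T-truth package.

```python
def rect(x0, y0, x1, y1):
    """Hypothetical helper: pixel set of the half-open box [x0, x1) x [y0, y1)."""
    return {(x, y) for x in range(x0, x1) for y in range(y0, y1)}


def covered(a, b):
    """Fraction of the pixels of region a that also belong to region b."""
    return len(a & b) / len(a) if a else 0.0


def count_measures(gt, result, significant=0.1, correct=0.9):
    """Count the six measures for one level of abstraction.

    gt and result map a region id to a set of (x, y) pixels.
    Both thresholds are illustrative assumptions, not values from [5].
    """
    counts = dict.fromkeys(
        ["correct", "partial", "over_segmentation",
         "under_segmentation", "missed", "false_positives"], 0)

    # Link every ground truth region to the result regions it overlaps
    # significantly, measured relative to either of the two regions.
    gt_links = {g: [] for g in gt}
    res_links = {r: [] for r in result}
    for g, g_px in gt.items():
        for r, r_px in result.items():
            if max(covered(g_px, r_px), covered(r_px, g_px)) >= significant:
                gt_links[g].append(r)
                res_links[r].append(g)

    for g, links in gt_links.items():
        if not links:
            counts["missed"] += 1              # no result region found it
        elif len(links) > 1:
            counts["over_segmentation"] += 1   # split into several regions
        elif len(res_links[links[0]]) == 1:
            # One-to-one match: correct if the mutual overlap is high enough.
            r_px = result[links[0]]
            mutual = min(covered(gt[g], r_px), covered(r_px, gt[g]))
            counts["correct" if mutual >= correct else "partial"] += 1

    for r, links in res_links.items():
        if not links:
            counts["false_positives"] += 1     # matches no ground truth
        elif len(links) > 1:
            counts["under_segmentation"] += 1  # merges several regions
    return counts


if __name__ == "__main__":
    gt = {"A": rect(0, 0, 10, 10), "B": rect(20, 0, 30, 10)}
    result = {"r1": rect(0, 0, 10, 10),
              "r2": rect(20, 0, 25, 10), "r3": rect(25, 0, 30, 10)}
    print(count_measures(gt, result))
```

In this sketch, a ground truth region that is merged with another one by a single result region is counted once, on the result side, as an under-segmentation. Whether the evaluation software counts such cases per ground truth component or per result component is not stated here, so treat that choice as an assumption as well.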

The evaluation software provided below (segment_evaluation.sh, part of the T-truth package) expects ground truth images in the "evaluation/gt" folder and result images in the "evaluation/result" folder. For each level of abstraction (table, row, column, etc.), the evaluation algorithm writes a file with the extension ".out" to the evaluation directory. Each file contains semicolon-separated benchmarking measures in the following order:

gt_img; result_img; GroundTruthComponents; SegmentationComponents; Number of OverSegmentations; Number of UnderSegmentations; Number of FalseAlarms; OverSegmentedComponents; UnderSegmentedComponents; False Alarms; Missed; Correct; Partial Matches

These benchmarking measures are described in [5].
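As a rough guide to consuming the ".out" files, the sketch below parses semicolon-separated records into dictionaries keyed by the field names listed above. It assumes one record per line, no header row, and integer values for every field after the two image names. None of these details are confirmed by this page, and the names `FIELDS`, `parse_out_line`, and `read_out_file` are hypothetical.

```python
from pathlib import Path

# Field order as given in the description of the ".out" files.
FIELDS = [
    "gt_img", "result_img", "GroundTruthComponents", "SegmentationComponents",
    "Number of OverSegmentations", "Number of UnderSegmentations",
    "Number of FalseAlarms", "OverSegmentedComponents",
    "UnderSegmentedComponents", "False Alarms", "Missed", "Correct",
    "Partial Matches",
]


def parse_out_line(line):
    """Parse one semicolon-separated record into a dict keyed by FIELDS."""
    # Tolerate a trailing semicolon and spaces around separators (assumption).
    values = [v.strip() for v in line.strip().rstrip(";").split(";")]
    if len(values) != len(FIELDS):
        raise ValueError(f"expected {len(FIELDS)} fields, got {len(values)}")
    record = dict(zip(FIELDS, values))
    for name in FIELDS[2:]:
        record[name] = int(record[name])  # assumes integer counts
    return record


def read_out_file(path):
    """Read every non-empty line of an '.out' file as one record."""
    text = Path(path).read_text(encoding="utf-8")
    return [parse_out_line(line) for line in text.splitlines() if line.strip()]


if __name__ == "__main__":
    # Made-up record for illustration only, not real evaluation output.
    sample = "0001.png; 0001.png; 3; 4; 1; 0; 1; 1; 0; 1; 0; 2; 0"
    print(parse_out_line(sample)["Correct"])
```

Raising an error on an unexpected field count is a deliberate choice in this sketch: a silent mismatch would shift every measure into the wrong column.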

Related Dataset

Related Ground Truth Data

Related Software

- T-truth Software and Samples (4.5 Mb)

References

- T. Kieninger and A. Dengel. Applying the T-RECS table recognition system to the business letter domain.

- J. Hu, R. Kashi, D. Lopresti, and G. Wilfong. Medium-independent table detection.

- S. Mandal, S. Chowdhury, A. Das, and B. Chanda. A simple and effective table detection system from document images.

- B. Gatos, D. Danatsas, I. Pratikakis, and S. J. Perantonis. Automatic table detection in document images.

- Asif Shahab, Faisal Shafait, Thomas Kieninger and Andreas Dengel, "An Open Approach towards the benchmarking of table structure recognition systems", Proceedings of DAS’10, pp. 113-120, June 9-11, 2010, Boston, MA, USA
